This blog post is based on the paper “Economists Entered the ‘Numbers Game’. Measuring Discrimination in the U.S. Courtrooms, 1971-1989” and a discussion I had with students on the recent Harvard discrimination case.

Students for Fair Admissions, an anti-affirmative action organization, sued Harvard in 2014 for discrimination in admissions against Asian American students. The trial ended last November, and both parties are expected to submit new documents in February 2019 before any decision is delivered. Well-known economists produced contradictory testimonies for the two sides of the adversarial process (defendant expert report can be found here, plaintiff one here). As reported by Eric Hoover in The Chronicle of Higher Education, famous labour economist David Card, testifying for Harvard, called the analysis that another recognized labour economist, Peter S. Arcidiacono, produced in support of the plaintiff “nonsensical”. The expert economists used broadly the same empirical methods, but followed different strategies and made different choices in reaching their conclusions. This exchange raises important questions: How can one trust economics as a field if economists call each other “nonsensical” in public? Beyond the individual credibility of economists and of economics as an area of knowledge, what’s at stake is economics’ public image. This issue is not specific to economists’ expertise in courts and echoes many concerns over the role of expertise in the public sphere.

The debate on the present testimonies (in some specialized press and on econtwitter) parallels numerous elements within the history of economists as expert witnesses: experts are “hired guns” available for a price; judges are not experts in statistics or econometrics and don’t understand the subtleties of the testimonies; which side hires the expert explains the results presented, hence experts are interchangeable; court decisions are based on many criteria other than the expert witnesses’ testimony.

Excerpts of these discussions among economists can be found in this tweetstorm by Susan Dynarski (especially the discussion on the “personal ratings” variable) and subsequent answers, as well as in this (short) thread on EconSpark.

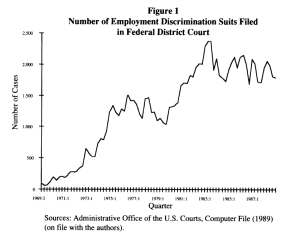

Economists are not new to expert witnessing. Nor are psychologists, sociologists, or social scientists in general, and social scientists using statistics in particular. Since the 1970s, however, economists working on discrimination have responded to an increasing demand. The general growth of employment litigation since the 1970s, and especially its spectacular rise in the early 1990s, went along with a rise in demand for expertise from the social sciences, a demand met by labour economists, among others (see the quantitative analysis in Berrey, Nelson & Nielsen 2017, chapter 3, which continues the important contribution by Donohue & Siegelman 1991). Battles over university admissions (and also academic employment) developed along the same lines, usually using the same quantification tools and the same legal bases and doctrines.

Orley Ashenfelter and Ronald Oaxaca’s reflection on the 1970s and 1980s is a fascinating read. They celebrated how “economists entered the courtroom” through the dissemination of Gary Becker’s model of discrimination (p.321). Their paper notified the economics profession that “[i]n practice, the economists’ view has made considerable headway in the courts”, a view first thought of as “irrelevant and hopelessly complex for legal minds” (p.323). In retrospect, the conquest of the courtrooms seemed easy. Was Justice Oliver Wendell Holmes’s prediction, according to which in law “the man of the future [wa]s the man of statistics and the master of economics” (1897, p.469), a prophecy? Can one agree with Judge Brown, who noted in 1962 that concerning racial discrimination “statistics often tell much, and courts listen” (Alabama v. United States, 304 F. 2d. 583, p.586)? Holmes is also known for coining the metaphor of the “marketplace for ideas” (Mercuro & Medema 2006, p.1).

By the 1980s, as recorded in the New York Times, “apply[ing] […] microeconomic and computer techniques to a mountain of statistical data” was already an intense area of methodological disputes and a booming market. Several elements explain the rise of economists (among other social scientists) as expert witnesses in discrimination cases: changes in legislation and litigation doctrines, important decisions regarding quantified expertise, and the evolution of economics itself: its corpus and object of inquiry, the type of tools used in applied microeconomics, as well as the use of these tools outside academia.

Producing evidence of differential treatment has meant measuring (and trying to explain) groups’ differences in various outcomes: rates of promotion, hiring, admission, or wages. Decomposing these differences into legitimate and illegitimate factors is at the heart of what courts are looking for as evidence of discrimination. As a note published in the Harvard Law Journal advocated in 1975 (written by Tom Campbell), the economists’ tool-kit, based on the operationalization of human capital theory and developments in micro-econometric estimation techniques, came to be seen by the legal profession as very useful in the production of such evidence. Starting in the 1970s, this expertise expanded in the 1980s, and eventually became institutionalized through the creation of a subfield, forensic economics, with its own JEL code (K13) in the late 1980s. Many economists who testified as expert witnesses do not identify as forensic economists, nor do they publish in this area. But the fact that a subfield was institutionalized in this period shows that the profession offered a specific institutional answer. While the techniques of applied micro have changed, experts who use them in court still face challenges similar to those in the current Harvard case.
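To make the idea of decomposing a gap into “legitimate” and “illegitimate” parts concrete, here is one standard formulation, the Oaxaca-Blinder decomposition. It is offered only as an illustration: the notation is mine, and nothing above says which specification any given expert actually used in court. Assume a linear regression with an intercept is estimated separately for groups $A$ and $B$, with $\bar{Y}$ the mean outcome, $\bar{X}$ the vector of mean characteristics and $\hat{\beta}$ the estimated coefficients for each group:

$$
\bar{Y}_A - \bar{Y}_B \;=\; \underbrace{(\bar{X}_A - \bar{X}_B)'\hat{\beta}_A}_{\text{explained by characteristics}} \;+\; \underbrace{\bar{X}_B'(\hat{\beta}_A - \hat{\beta}_B)}_{\text{unexplained}}
$$

The first term is the part of the gap attributed to differences in measured characteristics; the second, the part left unexplained, is what has often been read as an upper bound on discrimination. Which variables belong in $X$ is precisely where experts tend to disagree.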

The first aspect of the Harvard case that resonates with the recent history of applied micro in courts is the discussion of the diverging choices made by the experts. Choices of variables, estimation techniques, underlying theoretical principles, and modelling strategies are usually closely scrutinized by the courts. Cross-examination sometimes seems harsher than most peer-review processes. In the present case, while using the same method and the same data (produced by Harvard University), Arcidiacono and Card followed contrasting paths in the construction of their samples as well as in their modelling strategies.

Peter Arcidiacono testified for plaintiff Students for Fair Admissions. He excluded from his sample recruited athletes, the children of alumni, of Harvard faculty and staff members, and students from the “Dean’s List” of donors (these individuals belong to the so-called “legacy applicants” category). In total, 7,000 of the 150,000 applicants were excluded over the 6 years of admissions studied. The main argument for exclusion was that this group is not representative, as it displays very high rates of admission. Card specifically attacked Arcidiacono on this, arguing that although this group represents a small fraction of the applicants (5%), it accounts for a large proportion of accepted students (29%).
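A quick back-of-the-envelope check shows what these shares imply. The sketch below uses only the figures quoted above; the function name is mine, and the calculation assumes the 5% and 29% refer to the same pool of applicants.

```python
def relative_admit_rate(share_of_applicants, share_of_admits):
    """How many times more likely a group is to be admitted than everyone else.

    The overall admission rate cancels out, so only the two shares are needed.
    """
    group_rate = share_of_admits / share_of_applicants
    others_rate = (1 - share_of_admits) / (1 - share_of_applicants)
    return group_rate / others_rate


# Share of the sample that was excluded: 7,000 out of 150,000 applicants.
print(f"{7_000 / 150_000:.1%}")                   # 4.7%

# 5% of applicants accounting for 29% of admitted students.
print(round(relative_admit_rate(0.05, 0.29), 1))  # 7.8
```

Taken at face value, the excluded group is admitted at nearly eight times the rate of other applicants, which is why both the decision to drop it and the decision to keep it can be presented as consequential.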

Besides this disagreement over sample characteristics, the two economists chose different modelling strategies. Card modelled Harvard’s admission as a multiple-factor process, based on the four categories that admissions officers use: academic achievement, extracurricular activities, personal qualities, and athletic abilities. Harvard officers admit people not just based on grades and test scores, where Asian Americans outperformed all other groups, he argues, but on these four categories. Hence, the model tries to fit Harvard’s own definition of admission.

Arcidiacono did not include the variable Harvard called “personal ratings” as a control variable. He argues that this variable is tainted by potential discrimination: subjective ratings by admissions officers are precisely the loci where SFFA suspects discriminatory practices against Asian Americans. In the past, “personality tests” and such concepts as “leadership” and “character” have been used as discriminatory devices, especially against the admission of Jews at Harvard.
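The mechanics of this disagreement can be illustrated with a stylised simulation. Everything in the sketch below is made up for illustration: the variable names, the coefficients, and the linear probability model are my assumptions, not the experts’ specifications or Harvard’s data. By construction, group membership affects admission only through a subjective rating that penalises the group.

```python
import numpy as np


def simulate_tainted_control(n=50_000, rating_penalty=-0.5, seed=0):
    """Return the estimated group coefficient without and with the rating control."""
    rng = np.random.default_rng(seed)
    group = rng.integers(0, 2, size=n).astype(float)  # 1 = hypothetical group of interest
    academic = rng.normal(size=n)                     # hypothetical objective score
    # Assumption: the subjective rating is lower for the group, all else equal.
    rating = 0.5 * academic + rating_penalty * group + rng.normal(size=n)
    # Admission depends on academic score and rating only, never on group directly.
    admitted = (academic + rating + rng.normal(size=n) > 1.5).astype(float)

    def group_coefficient(*controls):
        # Linear probability model fitted by ordinary least squares.
        X = np.column_stack([np.ones(n), group, *controls])
        coef, *_ = np.linalg.lstsq(X, admitted, rcond=None)
        return coef[1]

    return group_coefficient(academic), group_coefficient(academic, rating)


without_rating, with_rating = simulate_tainted_control()
print(f"group coefficient, rating omitted:  {without_rating:.3f}")
print(f"group coefficient, rating included: {with_rating:.3f}")
```

In this toy setting the estimated penalty is clearly negative when the rating is left out and shrinks towards zero once it is included, because the rating absorbs the effect. The data alone cannot say whether the gap in ratings is itself discriminatory or reflects real differences, which is exactly what the two experts disagreed about.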

This discussion parallels earlier debates about statistical analyses of employment discrimination. The early trials for racial discrimination were concerned with the discriminatory use of specific tests as devices to bypass anti-discrimination legislation (see the history of the Griggs decision that established the disparate impact doctrine). Besides the use of tests, numerous legal cases include discussions of whether experts should include ratings of the employees’ work as a control variable for performance, when these ratings were made by the very people accused of discrimination. The context has changed, but the question of what to “control for” remains central, as does the question of which measurable variables are available.

Since the late 1970s, this question of which sample and variables should be used has prompted proposals for reform in the form of pre-trial agreements on the dataset, the sample, or even the variables. The 1989 “Panel on the evolving role of statistical assessments as evidence in the courts”, for example, suggested that agreement on some aspects of the analysis before expert witnesses deliver their testimony would produce analyses that are more comparable and, hence, easier for the judge to weigh. The panel members also suggested that courts should hire their own experts. Then and now, current practices, amplified by the adversarial nature of the US system, put the judge in charge of assessing the modelling strategies themselves.

The court needs to understand the type of evidence and the reasons offered by experts for their choices. The objective for the trier of fact is to be able to judge the accuracy as well as the relevance of the model, but also to trace any value judgements embedded within the quantitative analysis itself (as an early example, see the very long section on “the theory behind the parties’ mathematical modelling” in Vuyanich v. Republic National Bank, 505 F. Supp. 224, 1980). In the present case, the judge, Allison Burroughs, seems to have been sceptical of Arcidiacono’s choice to exclude 5% of individuals from his sample. She asked how many Asian Americans were found in this particular sample, insinuating that the exclusion would have the effect of overestimating discrimination.

Besides the content of testimonies, what is at stake in a trial is also the credibility of the expert. David Card opens his report with two pages of qualifications, organized along standard lines for a trial: education, list of publications, long list of awards and prestigious affiliations. The last paragraph mentions his compensation rate ($750 per hour) and his association with the consulting firm Cornerstone Research, with the (crucial) precision that he will not receive contingent fees. The list of his last four years of testimony is in an appendix. By contrast with the one paragraph on qualifications that Arcidiacono wrote, Card appears well trained in expert witnessing: he signals every element of objectivity based on institutional aspects, including transparency and prestige. Crucially, an expert’s credibility does not rely only on the scientific material he brings to the court.

While commentators are usually concerned with determining “who is right” (as in the debate on econtwitter), experts’ credibility is based on institutional aspects of science, not only on logical consistency. These conventional “markers of objectivity”, part of what Jasanoff calls “the naïve sociology of science” of the courts, inform how qualified an expert is deemed to be, as illustrated, for example, by her publication record or prestigious affiliations.

Note that in the Harvard case, two amicus curiae briefs, one supporting each side, were submitted by economists (other briefs include one from the entire group of Ivy League universities in support of Harvard). The amicus briefs signed by economists in support of Card and in support of Arcidiacono follow the same presentation: first the credentials of the economists and then the discussion of the analysis itself. After listing the names of the numerous amici, the brief in favour of Card specifies that it includes “two Nobel Laureates”, the “former chair of the Fed”, “four former Chief Economists of federal agencies”, etc. The text specifies that there is a diversity of opinion among them about the use of race-conscious admissions but that they all agree Card is qualified and “outstanding” as an expert. The amici add that “the criticisms of his modelling approach in the [other Brief of Economists in Support of Plaintiff] are not based on sound statistical principles or practices” (p.4). By contrast, the amicus brief in support of Arcidiacono is signed by three economists (Fang, Lowry and Shum; the brief was written by Keane), and one paragraph is dedicated to factual credentials, mainly mentioning affiliation to main academic institutions.

The ability to communicate and the rhetoric of the expert were and are equally important in courts. As MIT economist Franklin Fisher puts it, economists need to go beyond the mere display of “econometrics [as] black magic” (Fisher 1986). Fisher, who testified as an expert witness in an important set of cases (especially the famous IBM case), produced a short textbook-style article in 1980 on how one should use “multiple regression analysis in legal proceedings”. In a later paper published in 1986, he offers a long list of practical advice, ranging from the need to allocate enough time to explain probability theory to the use of rhetorical examples. Fisher also urged experts to “protect [their] subjective honesty” by hiring research assistants to do the “data management”.

At one point during his testimony, Card “stood up and wrote on a whiteboard, while explaining his statistical methods, the courtroom got a lesson in ‘average marginal effects’ and the importance of multivariate models”. The journalist adds: “That passed for legal drama on Tuesday”. By contrast, a week earlier, Arcidiacono “seemed relaxed on the stand, sitting back in his chair and gesturing with his hands” whereas “Card, leaning forward, answered questions precisely, rarely changing his tone of voice”. The way economists answer questions, as well as their body language, is also part of their credibility. Judging expertise also means judging the experts themselves.

What followed the cross-examination of Arcidiacono’s report was an examination of elements such as the fees he received ($450 per hour, not mentioned in the report), the controversial reception of one of his papers on affirmative action, and the funding he received from the Searle Freedom Trust, a libertarian think-tank that also funded the plaintiffs, Students for Fair Admissions. Arcidiacono did not have access to Card’s report before his testimony: this follows logically from the fact that he testified for the plaintiff, who is supposed to make the case for discrimination. The setting of a trial, beyond the knowledge produced and the expert’s credibility, is the third important determinant of the issue at stake.

Card had access to Arcidiacono’s report before he wrote his own, and his report is an extremely precise and well-done rebuttal of its main thesis: that Asian Americans’ higher scores by objective standards and their lower rates of admission are symptoms of discriminatory behaviour by Harvard admissions officers. He modelled Harvard’s admission process more closely, a process he takes as given in his analysis. By contrast, Arcidiacono’s modelling strategy aims to question the process itself.

An important element of the U.S. adversarial system is its inclination towards scepticism. Historically, experts’ testimonies have usually been decisive in destroying the other expert’s analysis, rather than in establishing their own relevance. This is what Sheila Jasanoff (1992) has observed about expert witnessing in general (she takes the example of DNA tests): the adversarial process reinforces scepticism over every bit of evidence, rather than consensus-seeking. While many would wish to put the two teams together to work on a final piece, disagreement is organized by the setting of the court itself, and in a strategic manner. Cross-examination is about undermining the credibility of the adversary’s experts, who are usually grilled by trained lawyers. These lawyers are well aware of the methodology used, while not being micro-economists themselves. Contrary to what Daniel Rubinfeld once advocated in “Econometrics in the Courtroom”, there is no publication of alternative modelling strategies and sensitivity tests. Still, it seems clear that the court setting, e.g. cross-examination that happens in a specific order (the schedule and timing of testimonies are crucial), is geared towards rebuttal, in the Popperian sense of refutation.

That means it is not sufficient to be right, whatever this means. It does not mean that anything goes either. But it does underscore that procedures and settings (in a word: context) matter a lot in the interpretation of the contingency of numbers produced by experts.

Two aspects of this can be underlined. First, the absence of individual testimonies. The last question (reported in this article) asked by the defendant’s lawyer to the plaintiff’s expert was to name a single member of Students for Fair Admissions who had been rejected by Harvard. The organization did not plan to call any Asian-American students who were rejected from Harvard to testify. The quantitative analysis thus became the central part of the claim, in the absence of flesh-and-blood plaintiffs. It echoes a famous case where the 8,000 pages of written reports produced by two economists were not enough in the absence of victims’ voices on the side of the plaintiff (EEOC v. Sears Roebuck, 1988).

Second, the centrality of statistical analysis. Examining quantitative analysis can take centre stage, compared to other testimonies, and especially individual testimonies. In some cases, it is the result of the nature of the trial (the structure of the case can be statistical in nature, for example); it could also be a strategy to frame a trial as a “battle of experts”. One aspect of this battle is that expert witnesses’ testimonies are not decisive in themselves, but in the way they help to destroy the other party’s narrative, as well as in their ability to constitute “backfire”. Traditional strategies of this organized scepticism are based on what Collins calls the “experimenter’s regress”: there can always be scientifically valid alternatives to one expert’s choices. It can also consist in increasing the number of causal factors to undermine the other expert’s causality claims (see e.g. Brandmayr’s analysis of the L’Aquila trial).

Important differences between the 1970s and 1980s and today are the development of economics (and econometrics) training for the legal profession and the tremendous improvement of information systems, on expert witnesses in particular, but also on cases and on the methods to be used. What is strikingly similar, however, is the way the social sciences are exposed in court. Legal settings reveal the social construction of sciences and how current practices are shaped by a variety of factors.

“The prevalent assumption was that scientific truth or consensus was always ‘out there’ for the law to find and that any failure to accomplish this goal was due to imperfections in the machinery of the law. Social studies of science pose a fundamental challenge to this relatively comfortable assessment. The difficulty of locating facts, truth, or consensus now seems to be embedded in the way science works.” (Jasanoff 1992, p. 356)

Social scientists then have to face the fact that “the role they are asked to play” (Brandmayr 2017, p.348) can also have an important impact on the issue at stake, along with the scientific knowledge they produce.

The Harvard trial is, after all, about admissions policy and transparency about the criteria used, their measurability, and their objectivity. In that regard, what seems at stake is Harvard’s use of race in its admissions, as well as many other factors (e.g. the very existence of legacy applicants has also been under fire, though to a much lesser extent). Proving that Harvard discriminates against Asian Americans would constitute a case against race-conscious admissions, while the way Harvard actually uses race in its admissions is not agreed on. The trial is about the public discussion of Harvard’s standards of admission and actual practices. Written guidelines, apparently submitted by Harvard during the trial, are exactly what such trials are about: forcing an institution to uncover its practices. Harvard’s practices can be inferred from decisions as observed using quantitative data, but also by asking admissions officers to justify their activities. Echoing an old methodological battle on the way choices are observed, the battle of economists in courts acts as a reminder that a trial is essentially an exercise in weighing the pluralism of evidence.